To set up an Apache Spark cluster, we need to know two things, one of which is how to set up the master node. Following is a step-by-step guide to set up the master node for an Apache Spark cluster. Execute the following steps on the node that you want to be the master.

1. Navigate to the Spark configuration directory (the conf directory under SPARK_HOME). SPARK_HOME is the complete path to the root directory of Apache Spark on your computer.

2. Edit the file spark-env.sh and set SPARK_MASTER_HOST. Note: if spark-env.sh is not present, spark-env.sh.template will be present. Make a copy of spark-env.sh.template with the name spark-env.sh and add or edit the field SPARK_MASTER_HOST. The part of the file with the SPARK_MASTER_HOST addition is shown below:

    spark-env.sh

    # Options for the daemons used in the standalone deploy mode
    # - SPARK_MASTER_HOST, to bind the master to a different IP address or hostname
    # - SPARK_MASTER_PORT / SPARK_MASTER_WEBUI_PORT, to use non-default ports for the master
    SPARK_MASTER_HOST='192.168.0.102'

Replace the IP with the IP address assigned to your computer (the one you would like to make the master).

3. Go to SPARK_HOME/sbin and execute the master start script (start-master.sh). The command reports where it is logging:

    Starting .master.Master, logging to /usr/lib/spark/logs/.

You would see the following in the log file, specifying the IP address of the master node, the port on which Spark has been started, the port number on which the web UI has been started, etc.

    Spark Command: /usr/lib/jvm/default-java/jre/bin/java -cp /usr/lib/spark/conf/:/usr/lib/spark/jars/* -Xmx1g .master.Master -host 192.168.0.102 -port 7077 -webui-port 8080

If you have any issues setting up, please message me in the comments section and I will try to respond with a solution.
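The spark-env.sh step of the master setup can be sketched as a small shell function. This is only an illustration under stated assumptions: the function name `set_master_host` is hypothetical, the value is written as a single-quoted shell assignment, and the IP address is the example address used in this guide.

```shell
# Sketch: create spark-env.sh from its template (if needed) and set SPARK_MASTER_HOST.
# set_master_host is a hypothetical helper, not part of Spark.
set_master_host() {
    conf_dir=$1      # Spark configuration directory, e.g. "$SPARK_HOME/conf"
    master_ip=$2     # IP address or hostname the master should bind to
    env_file="$conf_dir/spark-env.sh"

    # Copy the template only when spark-env.sh does not exist yet.
    if [ ! -f "$env_file" ]; then
        cp "$conf_dir/spark-env.sh.template" "$env_file" || return 1
    fi

    # Drop any earlier uncommented SPARK_MASTER_HOST line, then append the new one,
    # so calling the function again leaves exactly one entry.
    { grep -v '^SPARK_MASTER_HOST=' "$env_file" || true; } > "$env_file.tmp"
    echo "SPARK_MASTER_HOST='$master_ip'" >> "$env_file.tmp"

    # Write back in place so the original file's permissions are kept.
    cat "$env_file.tmp" > "$env_file"
    rm -f "$env_file.tmp"
}
```

With SPARK_HOME set, a call such as `set_master_host "$SPARK_HOME/conf" 192.168.0.102` would prepare the file, after which the master can be started from SPARK_HOME/sbin with `./start-master.sh`.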

# How to install Spark on Windows 10 without Hadoop

In summary, you have learned how to install Apache Spark on windows and run sample statements in spark-shell, and learned how to start spark web-UI and history server. $SPARK_HOME/bin/spark-class.cmd .history.HistoryServerīy default History server listens at 18080 port and you can access it from browser using Spark History Serverīy clicking on each App ID, you will get the details of the application in Spark web UI. logDirectory file:///c:/logs/pathĪfter setting the above properties, start the history server by starting the below command. You can enable Spark to collect the logs by adding the below configs to nf file, conf file is located at %SPARK_HOME%/conf directory. History server keeps a log of all Spark applications you submit by spark-submit, spark-shell. On Spark Web UI, you can see how the operations are executed. Spark Hello World Example in IntelliJ IDEAĪpache Spark provides a suite of Web UIs (Jobs, Stages, Tasks, Storage, Environment, Executors, and SQL) to monitor the status of your Spark application, resource consumption of Spark cluster, and Spark configurations.You can continue following the below document to see how you can debug the logs using Spark Web UI and enable the Spark history server or follow the links as next steps This completes the installation of Apache Spark on Windows 7, 10, and any latest.

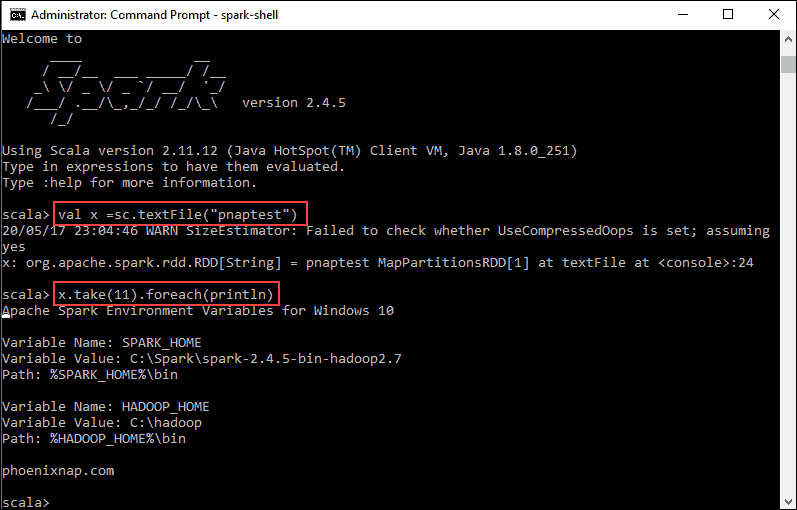

Rdd: .RDD = ParallelCollectionRDD at parallelize at console:24 Spark-shell also creates a Spark context web UI and by default, it can access from On spark-shell command line, you can run any Spark statements like creating an RDD, getting Spark version e.t.c
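The history server settings mentioned in this article could be written with a small shell function, for instance from Git Bash or WSL on Windows. Treat this as a sketch built on assumptions: the function name `enable_event_log` is hypothetical, the target file is assumed to be spark-defaults.conf, and the full property names (`spark.eventLog.enabled`, `spark.eventLog.dir`, `spark.history.fs.logDirectory`) are taken from Spark's standard configuration keys because the listing in the text is incomplete.

```shell
# Sketch: add event-log settings for the history server to a Spark properties file.
# Assumptions: the file is conf/spark-defaults.conf and the keys below are Spark's
# standard event-log properties; enable_event_log is a hypothetical helper.
enable_event_log() {
    conf_file=$1   # e.g. "$SPARK_HOME/conf/spark-defaults.conf"
    log_dir=$2     # e.g. file:///c:/logs/path

    # Append each property only if the file does not already set that key.
    for entry in \
        "spark.eventLog.enabled true" \
        "spark.eventLog.dir $log_dir" \
        "spark.history.fs.logDirectory $log_dir"
    do
        key=${entry%% *}
        if ! grep -q "^$key[[:space:]]" "$conf_file" 2>/dev/null; then
            echo "$entry" >> "$conf_file"
        fi
    done
}
```

A call such as `enable_event_log "$SPARK_HOME/conf/spark-defaults.conf" file:///c:/logs/path` would leave existing entries untouched, so it is safe to run more than once.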